Spatial Transformation by Pixel Shader

As reported in a previous blog post, the best animation performance in Silverlight 4 is obtained from the combination of procedural animation and a pixel shader. A pixel shader is meant to be used for the manipulation of colors and transparency, which also yields lighting and structural effects that can be expressed pixel-wise.

A pixel shader is not really meant to be used for spatial manipulation: spatial information is hard to come by (but see e.g. this discussion on the ShaderEffect.DdxUvDdyUvRegisterIndex property). Vertex shaders and geometry shaders exist to filter, scale, translate and rotate vertices and topological primitives, respectively. Another limitation of the use of shaders in Silverlight 4 is that only Shader Model 2 pixel shaders can be used, so that only 64 arithmetic slots are available. Finally, you can apply only one pixel shader to a UIElement.

In order to explore the limitations on spatial manipulation and the number of arithmetic slots, I decided to build a Silverlight 4 application that does some spatial manipulation of a playing video by means of procedural animation and a pixel shader. Well, this is also my first encounter with pixel shaders, so I gathered that a real challenge would show me many sides of pixel shaders (and indeed, no disappointments here).

The manipulation consists of two steps. First, the video surface is reduced in size in order to create some space for the second step. In the second step, the video is divided into a (large) number of rectangles that drift apart (disperse) while shrinking. The second step is animated.

To be frank, I’m not very excited about the results so far. It definitely needs improvement, but I need some time and a well-defined starting point for further elaboration.

The completed application

You can explore the completed application, called ‘Dispersion Demo’, in my App Shop. The application is controlled by four parameters:

- Initial Reduction. This parameter reduces the video surface. The higher the value, the greater the reduction.

- Dispersion. The Dispersion parameter controls the amount of dispersion of the blocks. If you set both Initial Reduction and Dispersion to 1, you can see the untouched video.

- Resolution. The Resolution is a measure of the number of blocks along both the X-axis and the Y-axis.

- Duration. Controls the duration of the dispersion animation.

The application also has three buttons to Start, Stop, and Pause the animation of the Dispersion. The idea is that you first select an Initial Reduction and a Resolution and then click Start to animate the Dispersion.
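As a rough illustration of what the three buttons could drive, here is a minimal Python sketch of a procedurally animated Dispersion value. It is not the application's actual C# code: the class name, the method names, and the linear ramp from 1 up to the Initial Reduction (Scale) are all assumptions made for this example. The only thing taken from the shader is the rule that Dispersion should never get bigger than Scale.

```python
class DispersionAnimation:
    """Hypothetical per-frame driver for the shader's Dispersion parameter."""

    def __init__(self, scale, duration, initial=1.0):
        self.scale = scale        # Initial Reduction; upper bound for Dispersion
        self.duration = duration  # seconds for the full ramp (the Duration parameter)
        self.initial = initial    # assumed starting value of Dispersion
        self.elapsed = 0.0
        self.running = False

    def start(self):
        self.running = True

    def pause(self):
        self.running = False

    def stop(self):
        self.running = False
        self.elapsed = 0.0

    def tick(self, dt):
        """Advance by dt seconds (one rendered frame) and return Dispersion."""
        if self.running:
            self.elapsed = min(self.elapsed + dt, self.duration)
        if self.duration <= 0:
            return self.scale
        t = self.elapsed / self.duration
        # Linear ramp (an assumption); never exceeds Scale because t <= 1.
        return self.initial + t * (self.scale - self.initial)
```

In a Silverlight application, the equivalent of `tick` would presumably be called from a per-frame callback, with the returned value assigned to the shader effect's Dispersion property.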

The video used in the application is a fragment of “Big Buck Bunny”, an open source animated video, made using only open source software tools (and an astonishing amount of talent).

Performance statistics

On my PC, the combination of some procedural animation and the pixel shader results in a frame rate of about 198 FPS and a footprint of about 2 MB of video memory. This is good (though ~400 FPS would be exciting), and leaves plenty of time for other manipulations of the video image.

The pixel shader

The pixel shader was created using Shazzam, an absolutely fabulous tool, and perfect for the job. The pixel shader shown below centers the image or video snapshot and reduces it. Then it enlarges it again according to the Dispersion factor. The farther away from the center, the larger the gaps are between the blocks displaying video, and the smaller the blocks themselves are.

sampler2D input : register(s0);

// new HLSL shader

/// <summary>Reduces image to starting point.</summary>

/// <minValue>1.0</minValue>

/// <maxValue>20.0</maxValue>

/// <defaultValue>10.0</defaultValue>

float Scale : register(C0);

/// <summary>Expands / enlarges the image.</summary>

/// <minValue>1</minValue>

/// <maxValue>20</maxValue>

/// <defaultValue>6</defaultValue>

float Dispersion : register(C1); //Dispersion should never get bigger than Scale!!!

/// <summary>Measure for number of blocks</summary>

/// <minValue>100</minValue>

/// <maxValue>800</maxValue>

/// <defaultValue>200</defaultValue>

float Resolution : register(C2);

float4 main(float2 uv : TEXCOORD) : COLOR

{

float ratio = Dispersion / Scale;

float offset = (1 - ratio) / 2;

float cutoff = 1 - offset;

float center = 0.5;

float4 background = {0.937f, 0.937f, 0.937f, 1.0f}; // Same as demo program's Grid

float2 dispersed = (uv - offset) / ratio;

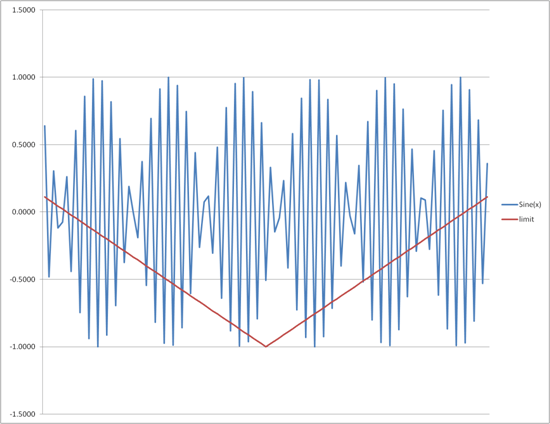

float2 limit = 4 * (abs(uv - center) * (Dispersion - 1) / (Scale - 1)) - 1;

if(uv.x >= offset && uv.x <= cutoff && uv.y >= offset && uv.y <= cutoff)

{

if (sin(dispersed.x * Resolution) > limit.x &&

sin(dispersed.y * Resolution) > limit.y)

{

return tex2D(input, dispersed);

}

else return background;

}

else return background;

}

In the code above, the outer “if” does the scaling and centering. The sine function and the limit variable take care of the gaps and the block size. As the accompanying graph shows, limit rises with the distance from the center and cuts off increasingly many values of the sine, thus creating smaller blocks and larger gaps.
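To make the thresholding concrete, here is a small CPU-side sketch in Python (not part of the shader). It evaluates the same limit expression for one axis and computes which fraction of a sine period passes the test `sin(x) > limit`. The function names are made up for this example, and it assumes Scale is greater than 1 so that the division is defined.

```python
import math


def limit(u, dispersion, scale):
    """The shader's threshold for one coordinate u in [0, 1]; assumes scale > 1."""
    return 4.0 * (abs(u - 0.5) * (dispersion - 1.0) / (scale - 1.0)) - 1.0


def visible_fraction(lim):
    """Fraction of one sine period for which sin(x) > lim."""
    if lim <= -1.0:
        return 1.0  # threshold below the sine's minimum: everything passes
    if lim >= 1.0:
        return 0.0  # threshold at or above the maximum: nothing passes
    return 0.5 - math.asin(lim) / math.pi


if __name__ == "__main__":
    # Walk from the center towards the edge of the image area
    # (offset = 0.2 and cutoff = 0.8 for Dispersion = 6, Scale = 10).
    for u in (0.5, 0.6, 0.7, 0.8):
        lim = limit(u, dispersion=6.0, scale=10.0)
        print(f"u={u:.1f}  limit={lim:+.3f}  visible={visible_fraction(lim):.3f}")
```

The visible fraction is per axis; a pixel shows video only where both the x test and the y test pass, so the blocks shrink in both directions as the distance from the center grows.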

Evaluation of limitations

Although this is a solution for the challenge set, it is not the solution. This solution uses scaling for dispersion, but what you really would like to have is a translation per dispersing block of pixels. Needing this reveals another limitation: in Silverlight 4 you cannot provide your pixel shader with an array of structs that define your block layout, hence it is very hard to identify the block a certain pixel belongs to, and thus what its translation vector is.

At this point I had the idea to code the block layout into a texture which is made available to the pixel shader (you can have up to three extra textures (PNG images) in your pixel shader). To create such a lookup map you have to create a WriteableBitmap with the values you want, then convert this to a PNG image as Andy Beaulieu shows, using Joe Stegman’s PNG encoder. The hard part will be the encoding (and the decoding in the shader). You have at your disposal 4 unsigned bytes, and you will have to encode 2D translation vectors that also contain negative numbers. The encoding / decoding will involve a signed / unsigned conversion, and the encoding of shorts (16-bit integers) as 2 bytes. This will, of course, be a feasible but tedious chore.

A better move would be to invest some effort in getting acquainted with Silverlight 5 Beta’s integration with XNA, which also makes vertex shaders available.

As far as the limit of 64 arithmetic slots is concerned, hitting it seems inevitable. But then, it always seems possible to rethink and simplify your code, thus reducing its size. This limitation turned out to be not very prohibitive.
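The encoding chore described above can be sketched as follows. This is only one possible scheme, written in Python for illustration: a bias of 32768 handles the signed / unsigned conversion, and each 16-bit value is split into a high and a low byte. The function names are hypothetical, and the sketch assumes that the texture is sampled without filtering and that the four channel values reach the shader unmodified.

```python
BIAS = 32768  # shifts signed 16-bit values into the unsigned range 0..65535


def encode_vector(dx, dy):
    """Pack two signed 16-bit integers into four unsigned bytes (R, G, B, A)."""
    for v in (dx, dy):
        if not -32768 <= v <= 32767:
            raise ValueError(f"{v} does not fit in a signed 16-bit integer")
    ux, uy = dx + BIAS, dy + BIAS
    return (ux >> 8, ux & 0xFF, uy >> 8, uy & 0xFF)


def decode_vector(rgba):
    """Inverse of encode_vector: four bytes back to two signed integers."""
    r, g, b, a = rgba
    return ((r << 8 | g) - BIAS, (b << 8 | a) - BIAS)


def decode_from_samples(samples):
    """Decode from channel values as a shader sees them: floats in [0, 1]."""
    r, g, b, a = (round(c * 255.0) for c in samples)
    return decode_vector((r, g, b, a))


if __name__ == "__main__":
    packed = encode_vector(-3, 200)
    print(packed, decode_vector(packed))
```

In the shader itself the decoding would have to be done in floating point, along the lines of `(high * 255 * 256 + low * 255) - 32768`, which costs a few of the 64 arithmetic slots.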

Procedural animation

The above pixel shader is integrated in the Silverlight application using the C# code generated by Shazzam. Then, when implementing procedural animation, another limitation came up concerning the manipulation of videos.

The initial plan was to display the video using a collection of WriteableBitmaps that could be manipulated using the high performance extensions from the WriteableBitmapEx library. However, it turns out that to take a snapshot from a MediaElement, you have to create a new WriteableBitmap per snapshot. This is expensive, since we now have to create about 30 or more WriteableBitmaps per second. Indeed, running the Dispersion application (at 198 FPS) sets the CPU load to about 50% on my PC. This is not really what we are looking for.

A way out is to implement the abstract MediaStreamSource class, for both the video part and the sound part of the video to be exposed. By the looks of it, this seems to be quite a challenge. However, Pete Brown has provided examples for both video and sound. And there is also the CodePlex project that provides the ManagedMediaHelpers. So, we add this challenge to the list.

Another experiment might be to see if the XNA sound and video facilities can be accessed when exploiting the Silverlight – XNA integration in Silverlight 5.

Conclusions

The challenge set turned out to be very instructive. I’ve learned a lot about writing pixel shaders, though there will be much more to learn. No doubt about that.

It is absolutely true that spatial manipulation is hard in pixel shaders :-), the main cause being the extraordinary effort required to provide the shader with sufficient data, or sufficiently elaborate data. The current shader needs improvement; the next challenge in writing shaders will address Silverlight 5 (beta) and XNA.

I noticed that you can achieve a lot in pixel shaders using trigonometry, and (no doubt) the math of signals.

Finally, in order to really do procedural animation on surfaces playing parts of a video, you seem to need to either implement MediaStreamSource or use the XNA media facilities.
