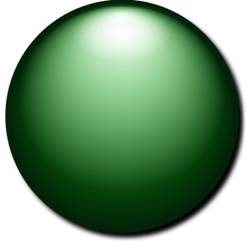

Glossy Button

The MS Expression Blend tutorials contain a tutorial on creating a glossy button. Well, I like shiny things, so I got into it. The tutorial is very practically oriented, so in this blog post we’ll start with some remarks on lighting practices that might create the illusion of a shiny object on your screen.

Lighting

With respect to lighting concepts, I will loosely follow Frank D. Luna here. The factors at play in lighting are the directional and wavelength attributes of the light(s) involved and the reflective properties of the material the light falls on.

Light

For human perception, colored light is a compound of red, green and blue light in varying intensities. The relative intensities of these components define the colors we know.

Ambient lighting is the lighting of an object by indirect light: light that has been reflected many times and therefore has no specific source. In ambient lighting models, neither the position of the observer nor the position of the light source plays a role. You might think of ambient light as revealing the color of an object (depending on the color of the light, of course).

Diffuse lighting of an object describes the scattered reflection of light coming from a specific source. The smoother the surface, the less diffuse its reflection and the shinier it looks. In diffuse lighting models, the position of the light source matters, but the position of the observer does not. We could say that diffuse lighting creates the gloss on smooth surfaces.

Specular lighting of an object involves directed light that is reflected (mostly) in one specific direction, ‘a cone of reflection’. In specular lighting models, the positions of both the light source and the observer are relevant: an observer outside the cone does not see the specular reflection. We might say that specular lighting creates the shine on a smooth surface.
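To make the three lighting terms a bit more concrete, here is a minimal sketch of the classic Phong reflection model for a single light, evaluated per RGB channel. It is the standard textbook model, not what the Blend tutorial does (the tutorial only fakes these effects with gradients). The function names, the ambient factor of 0.1 and the shininess exponent of 32 are my own assumptions, and all direction vectors point away from the surface point.

```python
import math


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def reflect(to_light, normal):
    # Mirror the direction towards the light about the surface normal: R = 2(N.L)N - L
    d = dot(to_light, normal)
    return tuple(2 * d * n - l for l, n in zip(to_light, normal))


def phong(normal, to_light, to_eye, light_rgb, material_rgb,
          ambient=0.1, shininess=32.0):
    """Phong shading of one surface point lit by one light (all values 0..1)."""
    n, l, v = normalize(normal), normalize(to_light), normalize(to_eye)
    # Ambient: independent of the light and observer positions
    ambient_term = ambient
    # Diffuse: depends on the light direction only (cosine of the incidence angle)
    diffuse_term = max(dot(n, l), 0.0)
    # Specular: depends on light AND observer; a narrow cone around the mirror direction
    specular_term = 0.0
    if diffuse_term > 0.0:
        specular_term = max(dot(reflect(l, n), v), 0.0) ** shininess
    # Ambient and diffuse take on the material color; the highlight has the light's color
    return tuple(
        min(1.0, lc * (mc * (ambient_term + diffuse_term) + specular_term))
        for lc, mc in zip(light_rgb, material_rgb)
    )


# A red, smooth surface under white light, lit from straight above
red, white = (1.0, 0.0, 0.0), (1.0, 1.0, 1.0)
print(phong((0, 0, 1), (0, 0, 1), (0, 0, 1), white, red))  # white: the highlight washes out the red
print(phong((0, 0, 1), (0, 0, 1), (1, 0, 1), white, red))  # (almost) pure red: observer outside the cone
```

The two calls differ only in the observer's position, which is exactly the distinction drawn above: the diffuse part is the same for both, while the specular shine appears only for the observer inside the cone.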

Light sources come in three flavors: parallel light sources, like the sun; point light sources, like a light bulb; and spotlights, like a flashlight. In 3D models, parallel light has a direction but no source location. A point light source has a position and emits light in all directions, with an intensity that falls off with the square of the distance from the source. Spotlights have a source position, a specific direction and also attenuation. According to Lambert’s cosine law, the intensity of diffusely reflected light is proportional to the cosine of the angle between the incoming light and the surface normal, so the perceived reflected intensity depends on both the angle and the distance.
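As a small worked example of these two fall-off effects, the sketch below computes the light arriving at a surface from an idealized point source: inverse-square attenuation with distance, multiplied by Lambert’s cosine factor for the angle of incidence. The function and parameter names are hypothetical. Real-time 3D APIs usually replace the pure inverse-square law with a tunable attenuation formula, so treat this as the physical idealization only.

```python
import math


def point_light_irradiance(source_intensity, distance, incidence_deg):
    """Light arriving at a surface from an idealized point source.

    incidence_deg is the angle between the incoming light and the surface normal.
    """
    if distance <= 0:
        raise ValueError("distance must be positive")
    # Lambert's cosine law: light hitting the surface at a grazing angle spreads out
    cos_theta = max(math.cos(math.radians(incidence_deg)), 0.0)
    # Inverse-square law: twice the distance gives a quarter of the light
    return source_intensity * cos_theta / distance ** 2


for d, angle in [(1, 0), (2, 0), (2, 60)]:
    print(d, angle, round(point_light_irradiance(100.0, d, angle), 2))
# last column: 100.0, 25.0, 12.5
```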

Material

Material properties define which wavelengths are absorbed and which are reflected (and to what extent), which determines the color, as well as how smooth the surface is. Rough material reflects light diffusely in all directions: under a strong light source it will look bright, but it will have hardly any gloss and no shine. Conversely, a smooth object has a clear gloss and acts almost as a mirror for the specular light (for an observer in a suitable position).
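A minimal sketch of how a material filters the light, assuming simple per-channel RGB reflectance values between 0 and 1 (a common simplification of the wavelength picture above; the names and values are hypothetical): each channel of the light is scaled by the fraction of that channel the material reflects, and the rest is absorbed.

```python
def reflected_color(light_rgb, reflectance_rgb):
    """Per-channel product of light color and material reflectance (0..1 each)."""
    return tuple(light * refl for light, refl in zip(light_rgb, reflectance_rgb))


brick = (0.8, 0.2, 0.1)  # hypothetical reflectance of a reddish material
print(reflected_color((1.0, 1.0, 1.0), brick))  # (0.8, 0.2, 0.1): white light shows the material's own color
print(reflected_color((0.0, 0.0, 1.0), brick))  # (0.0, 0.0, 0.1): blue light is almost entirely absorbed
```

Smoothness is not captured by this product; it governs how concentrated the reflected light is, and thus whether the object shows gloss and shine.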

Modeling lighting of a glossy button

In a DirectX or XNA application you can model lighting extensively, and the result can be quite realistic. This realism comes at the price of significant resources, which you would not want to spend on ‘just’ a button, so the same effects have to be simulated by other means. In this section we will first analyze how the glossy button from the tutorial is constructed, and then add some more features, for fun and enlightenment.

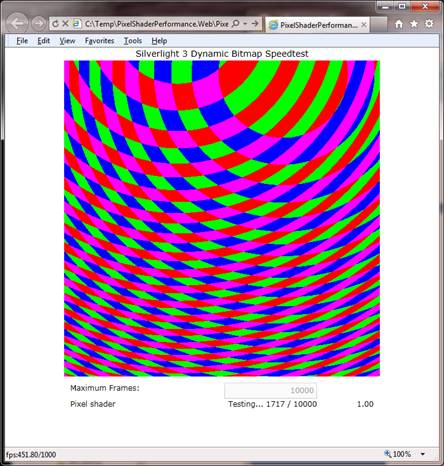

The Glossy Button Tutorial starts out with an ellipse, the button, filled with a color gradient (and with a robust edge). Because the gradient varies the brightness of the base color across the button, it covers both ambient and diffuse lighting. A second layer, the gloss, is an ellipse filled with a white gradient that fades into transparency; it also has a mild blur effect. This ellipse covers about two thirds of the button. The final layer, the shine, is a third, much smaller ellipse, also filled with a white gradient fading into transparency. The result is pretty cool, isn’t it?
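To see how the three layers add up, here is a minimal sketch that composites them at a single pixel with ordinary ‘source over’ alpha blending. It assumes linear gradients parameterized by t (0 at the start of a gradient, 1 at its end), uses made-up maximum opacities for the gloss and the shine, and ignores the blur effect; in Blend, the rendering engine does all of this for you.

```python
def lerp_color(c0, c1, t):
    """Linear interpolation between two RGB colors, t in 0..1."""
    return tuple(a + (b - a) * t for a, b in zip(c0, c1))


def over(dst_rgb, src_rgb, src_alpha):
    """'Source over' blending of a translucent layer onto an opaque color."""
    return tuple(s * src_alpha + d * (1.0 - src_alpha) for s, d in zip(src_rgb, dst_rgb))


WHITE = (1.0, 1.0, 1.0)


def button_pixel(t_button, t_gloss, t_shine, bright, dark,
                 gloss_alpha=0.6, shine_alpha=0.9):
    """Composite button, gloss and shine at one pixel.

    t_gloss / t_shine are None when the pixel lies outside that ellipse.
    gloss_alpha and shine_alpha are hypothetical maximum opacities.
    """
    color = lerp_color(bright, dark, t_button)        # button: bright -> dark
    if t_gloss is not None:                            # gloss: white -> transparent
        color = over(color, WHITE, gloss_alpha * (1.0 - t_gloss))
    if t_shine is not None:                            # shine: white -> transparent
        color = over(color, WHITE, shine_alpha * (1.0 - t_shine))
    return color


bright, dark = (0.3, 0.5, 1.0), (0.0, 0.1, 0.4)
print(button_pixel(0.9, None, None, bright, dark))  # lower edge: only the dark body color
print(button_pixel(0.2, 0.5, None, bright, dark))   # under the gloss: lightened
print(button_pixel(0.1, 0.2, 0.0, bright, dark))    # center of the shine: nearly white
```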

Did I mention the drop shadow (bottom right)?

Well, although it is pretty cool, one may have some disturbing questions, like ‘What exactly is the shape of this button?’ or ‘Where does the light come from?’ The shape cannot be a sphere: for that, the gloss extends too far towards the bottom, or, alternatively, the shine is too close to the top. It is also odd that the drop shadow is at the bottom right while the shine is in the middle. I also do not think that this button is very shiny; it doesn’t look as if it has a top layer of glass, as really shiny things do (I will get into this really shiny stuff in another blog post). Finally, it is strange that the rim has the same color all around the button. So the conclusion is that the ingredients may be there, but the recipe isn’t quite right.

I have tried to improve a bit on these shortcomings, but in the reader’s eyes the result might just as well have become worse, so read on ;-).

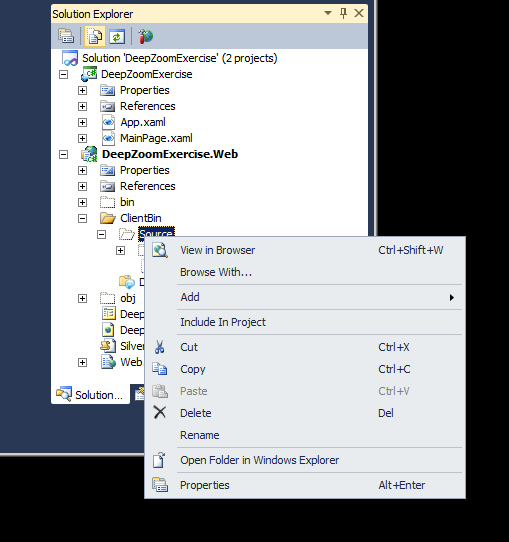

The glossy button demo application

The demo application has a number of additional features, the simplest being text on the button. Also, the button is rather large here, but its size can be changed through the Width and Height dependency properties without adversely affecting the visual properties or behavior of the control.

Color picker

The user can pick a color for the button using the color picker. The selected color is the bright color in the gradient; the application derives the dark end of the gradient itself. The rim is also set to this dark color derived from the selected color. The color picker control is part of the CodePlex Silverlight Contrib project.
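The post does not say how the dark end of the gradient is derived from the picked color. One plausible approach, shown here as a hypothetical sketch with a made-up lightness factor, is to keep the hue and saturation of the picked color and only lower its lightness, using Python’s standard `colorsys` module for the HLS conversion.

```python
import colorsys


def dark_variant(rgb, lightness_factor=0.35):
    """Hypothetical: darker color with the same hue and saturation (channels 0..1)."""
    h, l, s = colorsys.rgb_to_hls(*rgb)
    return colorsys.hls_to_rgb(h, l * lightness_factor, s)


picked = (1.0, 0.0, 0.0)        # bright end of the gradient, chosen by the user
rim = dark_variant(picked)      # dark end of the gradient, also used for the rim
print([round(c, 3) for c in rim])  # [0.35, 0.0, 0.0]
```

Working in HLS rather than simply multiplying the RGB channels makes the intent explicit (same hue, less light), although for a pure color like this red both approaches give the same result.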

Follow the mouse pointer

Although I’m not a fan of things on the desktop that follow the mouse pointer, I did provide that option here. It was either following the mouse pointer or developing some kind of jog/shuttle control to move the three gradients over the surface of the button, and following the pointer was quicker. So what you see is that within a certain range around the button, the button gradient, the gloss and the shine all follow the mouse pointer. The button does seem to have a spherical shape when you move the pointer around.
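A minimal sketch of the follow-the-pointer idea, with hypothetical names and numbers: within a capture range around the button, the pointer’s offset from the button’s center is normalized and shifts a gradient origin expressed in relative (0..1) coordinates, the kind of coordinates a Silverlight RadialGradientBrush uses by default for its GradientOrigin. Outside that range, the light stays at its default position.

```python
import math


def gradient_origin(pointer, center, size, default=(0.5, 0.3),
                    capture_range=150.0, max_shift=0.2):
    """Hypothetical mapping from pointer position to a relative gradient origin.

    pointer, center: (x, y) in pixels; size: (width, height) of the button in pixels.
    """
    dx, dy = pointer[0] - center[0], pointer[1] - center[1]
    # Reach: (roughly) the button's radius plus the capture range around it
    reach = max(size) / 2 + capture_range
    if math.hypot(dx, dy) > reach:
        return default                     # pointer too far away: light stays put
    # Offset normalized to -1..1 over the reach, scaled to at most max_shift
    return (default[0] + max_shift * dx / reach,
            default[1] + max_shift * dy / reach)


center, size = (200.0, 200.0), (200.0, 200.0)
print(gradient_origin((200.0, 200.0), center, size))  # pointer at the center: default origin
print(gradient_origin((325.0, 200.0), center, size))  # pointer to the right: origin shifts right
print(gradient_origin((900.0, 900.0), center, size))  # far away: default origin
```

Applying the same mapping to the button gradient, the gloss and the shine, each with its own `max_shift` (say, a larger shift for the small shine), is one way to get the impression of a sphere turning towards the light; the demo may well do this differently.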

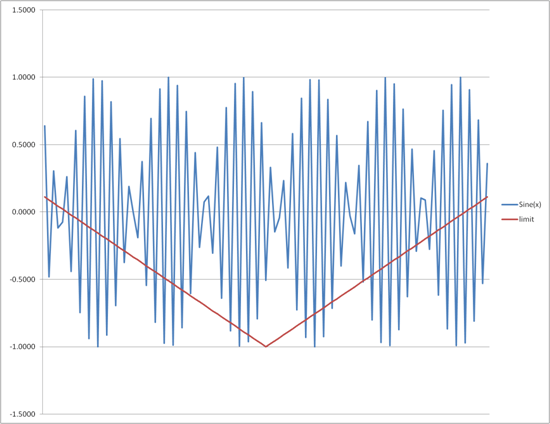

Proximity color effect

A more experimental addition is that the light intensity increases as the mouse pointer comes close to the button. This also means that for some lighter colors, the color changes when the mouse pointer is over the button. This agrees with the common experience that harsh white light dims or washes out color.
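A minimal sketch of a proximity effect along these lines, with hypothetical names and numbers: a brightness factor rises linearly from 1.0 (pointer out of range) to a maximum when the pointer is over the button, each color channel is multiplied by it, and the result is clipped at 1.0. The clipping is what changes lighter colors: their strongest channels saturate first, so the color drifts towards white, while darker colors simply get brighter.

```python
def proximity_boost(distance, capture_range=150.0, max_boost=1.5):
    """Hypothetical brightness factor.

    distance: pixels between the pointer and the button's edge (0 when the pointer is over it).
    """
    if distance >= capture_range:
        return 1.0
    closeness = 1.0 - max(distance, 0.0) / capture_range  # 0 at the range limit, 1 on the button
    return 1.0 + (max_boost - 1.0) * closeness


def lit_color(rgb, boost):
    """Brighten each channel and clip at 1.0."""
    return tuple(min(1.0, c * boost) for c in rgb)


boost = proximity_boost(0.0)              # pointer over the button
print(lit_color((0.4, 0.2, 0.1), boost))  # dark orange: brighter, channel ratios kept
print(lit_color((1.0, 0.8, 0.6), boost))  # light peach: clipped, drifts towards white
```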

Mouse Pressed visual state

When the left mouse button is pressed, a simple animation increases the size of the gloss and shine.

Concluding remarks

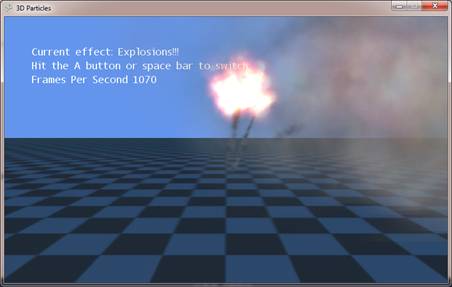

After pondering a nagging dissatisfaction with the results, I realized that a really shiny button also reflects nearby objects. Reflection is what really makes the difference. So, if you really want shiny buttons, you are in for true 3D models in your user experience. Although that seems a far cry, 3D-modeled user interfaces in general seem to be gaining momentum now. Just note trends like the use of the Kinect, the integration of Silverlight and XNA, the use of 3D in CSS3 and HTML5, and, of course, 3D video, even without glasses.

On the other hand, shiny buttons that do not reflect are already part of the Apple UX. There, the buttons look more like colored glass. These subjects, glassy buttons and 3D user interfaces, will be covered in upcoming blog posts.