A Windows Port of the 3D Scan 2.0 Framework

The 3D Scan 2.0 Framework, by the Chair for Virtual Reality and Multimedia of the Computer Science Institute, TU Freiberg, is a software framework that uses the Microsoft Kinect to create 3D scans. The software is based on a number of well-known open source frameworks:

OpenKinect, OpenSceneGraph, the ARToolkit, and VCGLib. Oddly enough, the 3D Scan 2.0 Framework is written in C++ and available for Linux only. Odd, since the Kinect is a Microsoft product and all of the supporting frameworks above have a Windows version as well. Reason enough to try to port the framework to Windows.

Downloading and Building the Supporting Frameworks

The Windows ports of the supporting libraries are of high quality and present no problems.

For OpenKinect you just follow the instructions on the Getting Started page. These instructions have you download and use tools like CMake-GUI, which translates files from the Linux build system into Visual Studio solutions and projects; Tortoise, a Subversion client; 7-Zip; and Notepad++. The latter handles the Unix (LF) vs. Windows (CR/LF) line-ending differences better than the standard Windows tools, which makes reading readme files much more comfortable.

Part of OpenKinect for Windows is libusb10emu. The 3D Scan Framework also needs it, so its source and header files will have to be added to the SciVi solution; see below.

The instructions let you download and install libusb-win32, pthreads-win32, and Glut. All provide binaries and include files. Other supporting frameworks use these as well. After working my way through the instructions, OpenKinect works like a charm.

OpenSceneGraph provides the source code, but you can download nightly release and debug builds for Windows from AlphaPixel, also for Visual Studio 2010, in both 32-bit and 64-bit versions. The test programs then usually run fine (there are many, and some just don’t run at once).

VCGLib just needs to be downloaded, no building required.

The ARToolkit does require building. To build it with Visual Studio 2010, just convert the solution and build it (a few times; the order of the projects is such that a single build will not do). After building, a number of test applications run nicely.

Building the 3D Scan 2.0 Framework

To build the 3D Scan 2.0 Framework you have to create your own Visual Studio 2010 solution. This solution has three projects: the Poisson lib, which implements the Poisson surface reconstruction algorithm; libusb10emu, which emulates the libusb 1.0 library (USB functionality that is available for Linux but not for Windows); and Scivi, which holds the scanner, the generator, and the test and benchmark applications.

Building the solution brought up a few strange errors, like the use of “and” instead of && in the code, but no big issues.
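The “and” error is worth a small illustration. `and`, `or` and `not` are standard C++ alternative tokens that GCC accepts as keywords, whereas Visual C++ in its default mode only provides them as macros in `<iso646.h>`. Below is a minimal sketch, assuming you would rather add an include than edit every expression. The function names are made up for illustration and do not come from the framework's sources.

```cpp
// Visual C++ (default mode) only knows `and`, `or`, `not` as macros
// defined in this header; with GCC the header is harmless in C++.
#include <iso646.h>

// Hypothetical helper, written with the alternative token the way
// the Linux code does.
bool inRange(int value, int low, int high)
{
    return value >= low and value <= high;
}

// The same check with the operator that compiles everywhere
// without the extra include.
bool inRangePortable(int value, int low, int high)
{
    return value >= low && value <= high;
}
```

Either route should get rid of the error: include the header in the offending files, or replace the tokens with `&&`, `||` and `!`.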

The build largely revolves around libusb10emu.

The Test Applications

The framework contains a number of test applications: testOsg, testKinect, test, testColor, and testPoisson.

The testOsg Application

This application worked immediately, without a problem; see the image below.

To shrink or expand a cube, click it and then roll the scroll wheel.

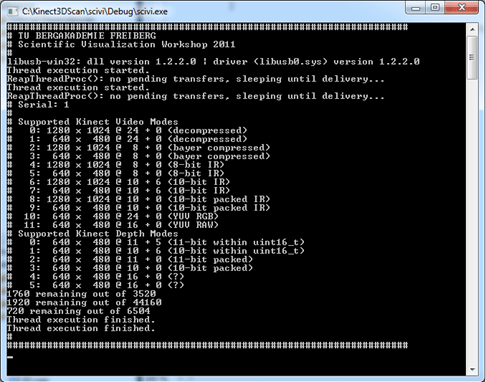

The testKinect Application

This is a relatively simple application that reads the serial number from the Kinect, and queries and displays its video modes, both color and depth. At this point we ran into some hard problems with the libusb10emu lib. The application presumes an initialized USB device here, one that has a ‘context’ and a mutex. However, there is no initialized libusb context, and there is not going to be one either. As a result the application uses a non-existent mutex, and Bingo!

On the other hand, if we skip the code that digs up the serial number and just provide a mock-up (I chose ‘1’), the application works fine.
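I simply skipped the serial number code, but the same idea can be written as a guard: check that there is a context before touching anything that hangs off it, and fall back to the mock-up otherwise. Below is a minimal sketch of that shape. All types and names in it are hypothetical stand-ins; the real libusb10emu structures look different.

```cpp
#include <string>

// Hypothetical stand-ins for the emulated USB types.
struct UsbContext { };                 // would own the mutex
struct UsbDevice
{
    UsbContext* context;               // null when never initialized
    std::string serial;
};

// Returns the device's serial number, or the fallback when there is
// no device or no initialized context to query it through.
std::string serialOrFallback(const UsbDevice* device,
                             const std::string& fallback)
{
    if (device == nullptr || device->context == nullptr)
        return fallback;               // never touch the missing mutex
    return device->serial;
}
```

Calling `serialOrFallback(device, "1")` reproduces the mock-up I chose when no context is available.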

The test Application

This is the central test application. It also digs up the serial number of the Kinect, which it requires in order to load and store calibration data. So I just made up a mock file name in the code, “test”, and skipped querying the serial number. Then the application works fine. It even reads calibration data, and does some calibrating, after renaming a designated file to “test.yml”.

If you look at the picture of me (yes, that’s me) made using the test application, and compare it to the images in the calibration section of the documentation, you would conclude that the calibration is sufficient, at least compared to the image made with the uncalibrated Kinect.

The code also contains undocumented “I” and “k” control options that raise and lower an AR threshold. I don’t know what that does. There is also an “s” control key. It provides statistics overlays: press it three times for increasingly more information, and a fourth time to remove all overlays again.

A quirk of this application is that it randomly portrays its subject mirrored or not. On one run the image is presented correctly; on another the scene is mirrored.
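The “s” key behaves like a counter that wraps around. As a rough sketch of that behaviour only (the names are mine, and this is not how the framework necessarily implements it):

```cpp
// Overlay states: 0 = no overlay, 1 to 3 = increasingly more statistics.
const int kOverlayStates = 4;

// Returns the overlay level that follows `current` after one key press.
int nextOverlayLevel(int current)
{
    return (current + 1) % kOverlayStates;
}
```

Starting from level 0, three presses lead to level 3, and the fourth wraps back to 0, which removes the overlays.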

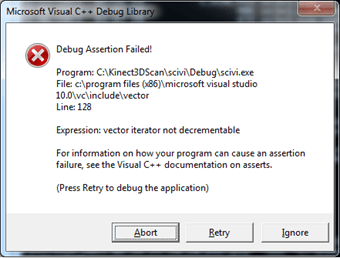

The Poisson Benchmark and testPoisson Applications

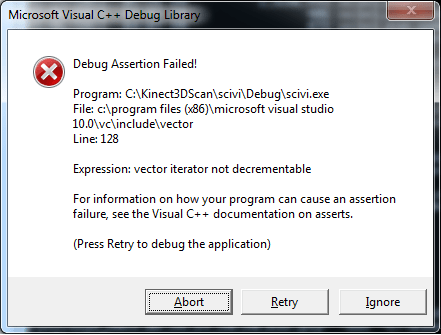

After adjusting the hard-coded file paths, the program gets to work as it seems designed to. It loads the vertex file (phone), counts the vertices (80870), and starts to remove duplicates. Then after a short while the application throws an exception.

The same holds for the testPoisson application. The exception is generated in the code of a not very accessible algorithm that removes duplicate vertices. I don’t know why the application throws an exception, but the code both decrements an iterator (and changes its value in other ways) and erases elements from the vector. Most probably the algorithm needs some specific parameters to operate correctly.
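Mixing iterator arithmetic with erasing is a known source of trouble in C++: `std::vector::erase` invalidates every iterator at or after the erased position, and Visual C++ debug builds check iterators more strictly than a typical Linux build does. Whether that is what goes wrong here I can't say. For comparison, below is a minimal sketch of a duplicate removal that never touches an iterator after an erase. It is not the framework's algorithm: the `Vertex` type is hypothetical, the vertex order changes because of the sort, and vertices count as duplicates only when their coordinates are exactly equal (and are assumed not to be NaN).

```cpp
#include <algorithm>
#include <vector>

// Hypothetical vertex type; the framework's own type will differ.
struct Vertex { float x, y, z; };

// Lexicographic order on (x, y, z), needed for sorting.
bool lessThan(const Vertex& a, const Vertex& b)
{
    if (a.x != b.x) return a.x < b.x;
    if (a.y != b.y) return a.y < b.y;
    return a.z < b.z;
}

// Exact coordinate equality; no tolerance is applied.
bool sameVertex(const Vertex& a, const Vertex& b)
{
    return a.x == b.x && a.y == b.y && a.z == b.z;
}

// Sort so that duplicates become neighbours, then drop them with a
// single erase call. No iterator is used after the erase.
void removeDuplicates(std::vector<Vertex>& vertices)
{
    std::sort(vertices.begin(), vertices.end(), lessThan);
    vertices.erase(
        std::unique(vertices.begin(), vertices.end(), sameVertex),
        vertices.end());
}
```

If the original vertex order matters, for instance because faces refer to vertices by index, this approach needs an extra index remapping step that the sketch leaves out.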

The testColor Application

This application couldn’t be run because the required data isn’t available for download at the project’s web site.

The Scan and Generate Applications

The Scan application generates a point cloud with data from the Kinect; the Generate application creates a mesh from the point cloud.

Scan

The scan application required an adaptation in the OSG lib. The Kinect delivers RGB color data, not RGBA color data, so the assumptions in the code had to be adapted accordingly.
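To show why the RGB versus RGBA assumption matters: the position of a pixel in a tightly packed buffer depends on the number of bytes per pixel, so code that assumes four bytes reads the wrong bytes from a three-byte buffer. Below is a minimal sketch of the indexing. The names are made up for illustration; this is not the code I changed in the OSG lib.

```cpp
#include <cstddef>
#include <vector>

struct Color { unsigned char r, g, b; };

// Reads pixel (x, y) from a tightly packed, row-major image buffer.
// bytesPerPixel is 3 for RGB data and 4 for RGBA data; the caller
// must make sure (x, y) lies inside the image.
Color pixelAt(const std::vector<unsigned char>& buffer,
              std::size_t width, std::size_t x, std::size_t y,
              std::size_t bytesPerPixel)
{
    const std::size_t offset = (y * width + x) * bytesPerPixel;
    Color c = { buffer[offset], buffer[offset + 1], buffer[offset + 2] };
    return c;
}
```

With RGB data, pixel 1 of a row starts at byte 3; code that assumes RGBA looks for it at byte 4 and so mixes up the color channels, and further on it reads past the end of the buffer.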

I made a scan of our teapot. With some imagination you can discern the teapot in the point cloud, but it is not very convincing. Obviously, some extra parameter tuning is needed to obtain results that match the quality of the examples on the project site, although I don’t know how.

Generate

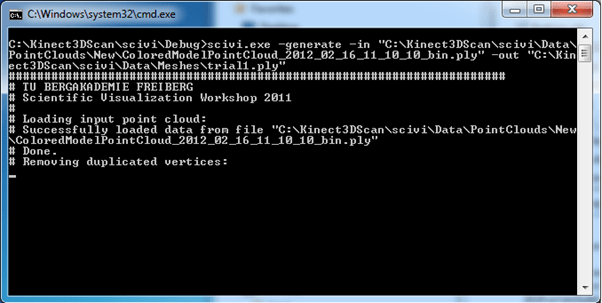

The Generate application loaded the point cloud file successfully and ran into the above-mentioned error when removing duplicates.

Conclusion

I think the idea of using the Kinect for 3D scanning is fabulous. However, it turns out that it is not straightforward to use the software. A number of questions and problems arise, mainly concerning removing duplicate vertices and tuning the scan mechanism (calibration?). Perhaps the members of the 3D Scan 2.0 Framework team can help out. If so, more results will follow on this blog.

![clip_image002[7] clip_image002[7]](https://thebytekitchen.com/wp-content/uploads/2012/01/clip_image0027_thumb.jpg?w=543&h=426)