Harder to C++: Monads for Mortals [4], References and Move Semantics

In part 3 we have seen a simple monad with delayed evaluation. Until we find a use for delayed evaluation that adds value to mainstream imperative practices, we will focus on the eager or strict evaluating monad. Until the shootouts, for sure.

In part 2 we have seen a simple implementation of the eager monad. A drawback of that specific implementation is that it copies its value around, which will lead to poor performance in case of large data structures.

In this part we will examine an improved implementation that has the same time performance for any data size (that fits in RAM memory). We will improve the design of part 1 by using references and move semantics. To me, a good read on move semantics seems this article on StackOverflow.

Of course, we want to see the move semantics in action, so we can be sure the presented code indeed uses move semantics and references, hence is a performant solution. To be able to trace how an object, representing the monad value is moved around, we construct a class that will serve as the value, and which contains all necessary constructors, destructors and assignment operators, each fitted with an output statement reporting its execution.

We will add the same trace instruments to the monad template,. This requires that the unit function is integrated into the monad template in the form of various constructors (hence destructors) and assignment operators. To integrate the unit function into the monad template this way is not different from the practice Eric Lippert exercises in his tutorial on monads. There will be no use of a so called swap function in applicable constructors and assignment operators; that would again merge what we are trying to separate here.

Implementation of a Monad with References and Move Semantics

The Value Class

We will use the following class as the value in the monad to evaluate the use of refs and move semantics.

The constructors, destructor and assignment operators are all textbook examples. The class has a payload which we will use in performance measurements. It is a char array of size sz. The ValueObject class has a method (at the bottom) that returns size sz.

class ValueObject

{

unsigned int sz;

char* payload;

public:

// default

ValueObject();

// Ref argument constructor

ValueObject(const int& i);

// Copy constructor

ValueObject(const ValueObject& v);

// Move constructor

ValueObject(ValueObject&& v);

// Destructor

~ValueObject();

// copy assignment

ValueObject& operator=(const ValueObject& v);

// move assignment

ValueObject& operator=(ValueObject&& v);

unsigned int size() const;

};

Each constructor, destructor and assignment operator writes a line to the console to mark that it has been called.

To comfortably output the trace we use an output operator overload:

ostream& operator<<(ostream& os, const ValueObject& v)

{

os << v.size();

return os;

}

We test the ValueObject class with code that exercises all methods above.

int _tmain(int argc, _TCHAR* argv[])

{

SetConsoleTitle(L"Harder to C++: Monads [4]");

{

ValueObject v1;

ValueObject v2(10);

ValueObject v3(v2);

ValueObject v4(std::move(v3));

v4 = v1;

v2 = std::move(v1);

}

// The destructors get called at the end of the scope

cin.get();

return 0;

}

Which results in the following output.

The Monad Template

The monad type constructor does double duty as a unit function, with a broad spectrum of constructors. Below you will find the declarations in the template definition.

template<typename V> struct monad

{

// Wrapped value

V value;

// std::move is here to coerce the use of v's move constructor, if any

// The parameter V& is *not* const. Const coerces the use of V's copy

// constructor

monad(V& v); // : value(std::move(v))

// std::move is here to coerce the use of v's move constructor, if any

monad(V&& v); // : value(std::move(v))

// copy constructor

monad(const monad& m);

// move constructor

monad(monad&& m); // : value(std::move(m.value))

//destructor

~monad();

// copy assignment

monad& operator=(const monad& m);

// move assignment

monad& operator=(monad&& m);

};

Most implementations are standard. Note that there is no parameterless default constructor. The signature of the first constructor is special in that one would expect a const V& parameter, like so

monad(const V& v) : value(std::move(v))

But doing that would coerce the use of the ValueObject’s copy constructor instead of the desired move constructor; the copy constructor has a const parameter, whereas the move constructor has a non-const parameter.

As with the ValueObject class, each method writes a line to the console to mark that it has been executed.

We test the monad template with code that exercises all methods above.

int _tmain(int argc, _TCHAR* argv[])

{

SetConsoleTitle(L"Harder to C++: Monads [4]");

{

int i = 11;

monad<int> m1(i);

monad<int> m2(10);

monad<int> m3(m2);

monad<int> m4 = std::move(m2);

m4 = m3;

m4 = std::move(m1);

}

// The destructors get called at the end of the scope

cin.get();

return 0;

}

Which results in the following output.

Monad Bind Function

The Bind function has not really changed, compared to previous versions, but it does report on its use now.

// Bitwise OR operator overload: bind function

template<typename A, typename R>

monad<R> operator| (const monad<A>& mnd, monad<R>(*func)(const A&))

{

cout << line++ << "\t" << "---Function bind" << endl;

return func(mnd.value);

}

For use with the ValueObject, we will add two example functions. In analogy to previous installments, the first function divides the size of the payload by 2, the second function tells us if the size is even.

// divides arg by 2 and returns result as monadic template

monad<ValueObject> divideby2(const ValueObject& v)

{

cout << line++ << "\t" << "---Function divideby2" << endl;

return monad<ValueObject>(ValueObject(v.size() / 2));

}

// checks if arg is even and returns result as monadic template

monad<bool> even(const ValueObject& v)

{

cout << line++ << "\t" << "Function even" << endl;

return monad<bool>(v.size() % 2 == 0);

}

Tracing Moves

We are now in a position to trace the application of functions by the monad, and verify that indeed only references and move semantics are applied. We do this with the following code.

int _tmain(int argc, _TCHAR* argv[])

{

SetConsoleTitle(L"Harder to C++: Monads [4]");

{

cout << line++ << "\t" << "---Object creation" << endl;

ValueObject v1(22);

monad<ValueObject> m1(v1);

cout << line++ << "\t" << "---Creation of m2" << endl;

auto m2 = m1 | divideby2 | even;

cout << line++ << "\t" << boolalpha << m2.value << endl;

cout << line++ << "\t" << "---Leaving scope" << endl;

}

// The destructors get called at the end of the scope

cin.get();

return 0;

}

Which results in the following output.

The good news is: the code indeed only uses references and move semantics.

But OMG, What A Shocking Lot Of Function Calls! What a Hydrocephalic Overhead!!!

Look what happens between entering the function divideby2 and the subsequent call to the bind function: No less than 8 calls to constructors and destructors. What a shocking waste of time and effort. To create 2 objects, apply 2 functions, and then clean up the mess, 24 function calls are required. What circumstances could ever justify such an overhead?

But of course, we cannot blame just the monad for this, it is also the move semantics. And that is what really shocks me. How could they do that? Now I understand why the committee is ready to embrace the monad. Who cares about the overhead of the monad if you already have the overhead of move semantics?

Minimal Verification that Time Performance is Independent of Size

Ok, let’s put the drama behind us and see if this solution is indeed size independent. We will compare a payload of size 1 to a payload of 10,000,000,000.

To make the test size independent we will use an identity function. This function does not modify the data, it just moves the data on – Yes it is still immutable data. The identity function will not muddle time measurements with memory (de-)allocations for the actual data, so we will only measure the overhead for the monad and the move semantics.

Time measurements will be made with the high resolution performance timer by Simon Wybranski for a release win32 build.

The identity function looks like this.

monad<ValueObject> identity(const ValueObject& v)

{

return monad<ValueObject>(const_cast<ValueObject&>(v));

}

The test code is as follows.

int _tmain(int argc, _TCHAR* argv[])

{

SetConsoleTitle(L"Harder to C++: Monads [4]");

double total = 0;

for (unsigned int k = 0; k < 10; ++k)

{

//ValueObject v1(1);

ValueObject v1(10000000000);

monad<ValueObject> m1(v1);

double elapsed = 0;

for (unsigned int i = 0; i < 10000; ++i)

{

t.Start();

auto m2 = m1

| identity | identity | identity | identity | identity

| identity | identity | identity | identity | identity

| identity | identity | identity | identity | identity

| identity | identity | identity | identity | identity

| identity | identity | identity | identity | identity

| identity | identity | identity | identity | identity;

t.Stop();

elapsed += t.Elapsed();

}

// The destructors get called at the end of the scope

cout << "Time 30 x 10000 applications: " << elapsed << " ms" << endl;

total += elapsed;

}

cout << "Average time over 30 x 10000 iterations: " << total / 10 << " ms"

<< endl;

cin.get();

return 0;

}

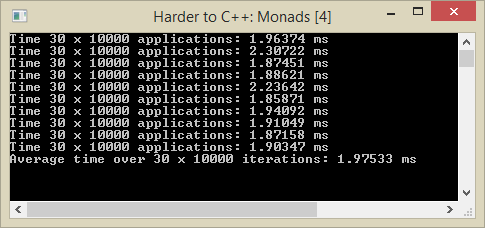

A typical output is

Comparable values (about 2 ms) are indeed obtained for both the large and the small payload.

Pros, Cons, and Questions

So, a strict monad that is based on references and move semantics takes 2 ms to do nothing 30,000 times, independently of size of the data structure 🙂 .

The amount of time per function application is small, so we might conclude that references and move semantics indeed provide an advantage over code that always copies its data structures, as we had in parts 1 and 2.

On the other hand, I find the amount of constructor and destructor calls distressing: returning a monad from a function takes two constructor calls and two destructor calls. Can we do better than that? Is it worth the trouble to try and do better than that?

I think it surely is worth the trouble. There is always the potential comparison between 2 programs that are functionally equivalent, but one program needs only half the instructions the other program needs. The advantages of the smaller program are of great importance. It can run equally fast on much less expensive hardware, it can save 50% battery power compared to the other program. On equal hardware it leaves room for the execution of other instructions that can contribute to a significantly better UX. So, finding the best performance brings you a competitive edge that can make a world of differences in the long run, or at large scale.

Next episode we will investigate if a pointer based monad can do any better than the constructs presented here.